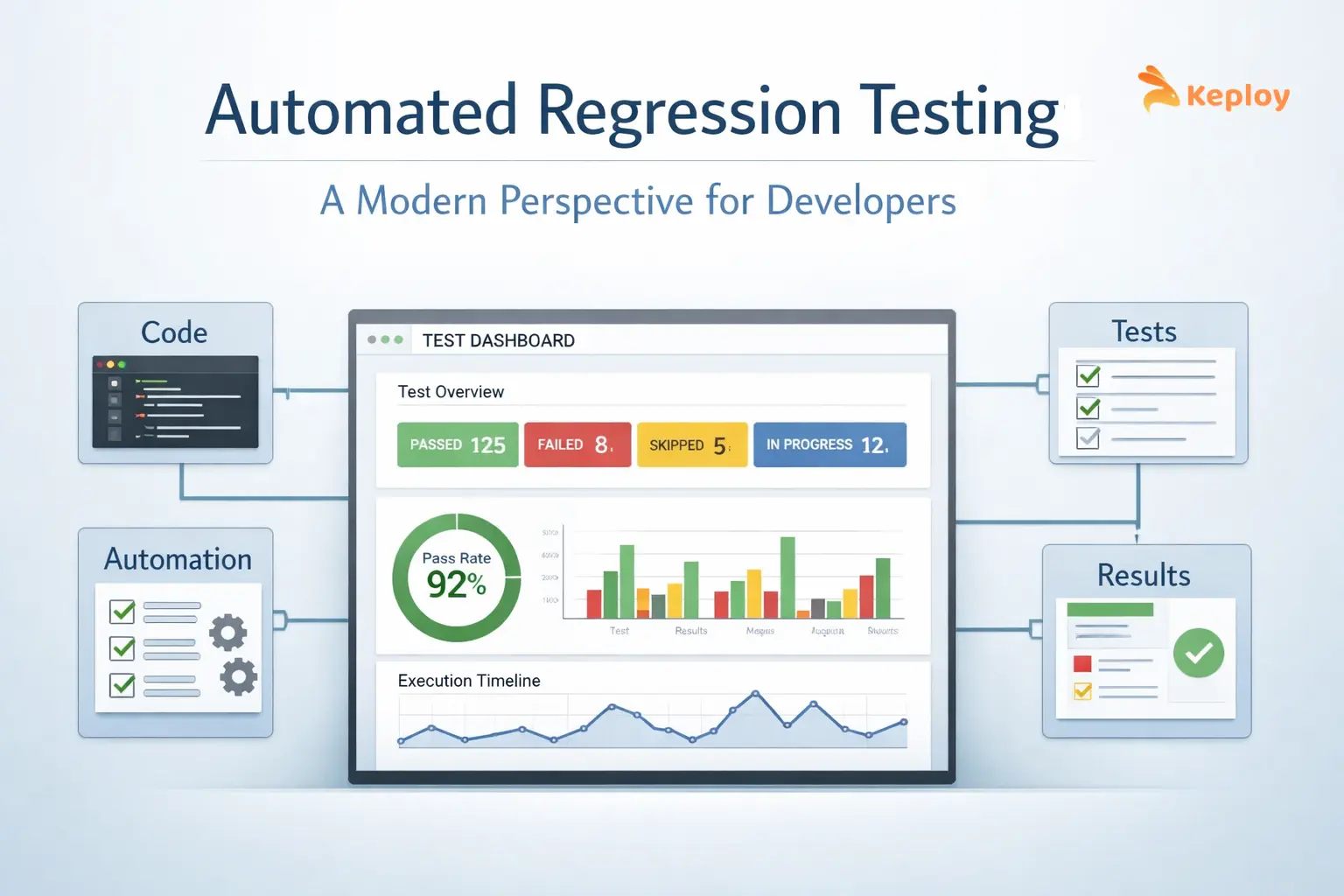

Automated regression testing is no longer just about rerunning test cases after every change. In modern systems, it’s about ensuring that rapid releases, distributed architectures, and constant updates don’t silently break existing functionality.

As teams move faster, the real challenge is not running more tests, but running the right ones efficiently.

What is Automated Regression Testing?

Automated regression testing is the process of using scripts or tools to re-run previously executed test cases automatically to ensure that new changes haven’t introduced unexpected issues.

The core question it answers: did anything break after this change?

Instead of manually validating features every time, teams rely on automation to:

- Re-run critical test cases
- Confirm existing functionality holds
- Surface regressions before they reach production
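As a minimal sketch of what such a check can look like, the example below pins the previously observed behavior of a hypothetical `cart_total` function. The function name, prices, and discount rule are assumptions made purely for illustration; the point is that the test encodes behavior that was known to work and fails loudly if a later change alters it.

```python
from decimal import Decimal


def cart_total(prices, discount_percent=0):
    """Hypothetical function under test: sum prices, then apply a percentage discount."""
    subtotal = sum(prices, Decimal("0"))
    discount = subtotal * Decimal(discount_percent) / Decimal("100")
    return (subtotal - discount).quantize(Decimal("0.01"))


def test_cart_total_regression():
    # Expected values were captured while the behavior was known to be correct.
    assert cart_total([Decimal("10.00"), Decimal("5.50")]) == Decimal("15.50")
    assert cart_total([Decimal("20.00")], discount_percent=25) == Decimal("15.00")
    assert cart_total([]) == Decimal("0.00")
```

A test runner such as pytest would pick up `test_cart_total_regression` on every run, so a change to rounding or discount logic surfaces immediately rather than in production.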

Modern systems have made this harder than it sounds.

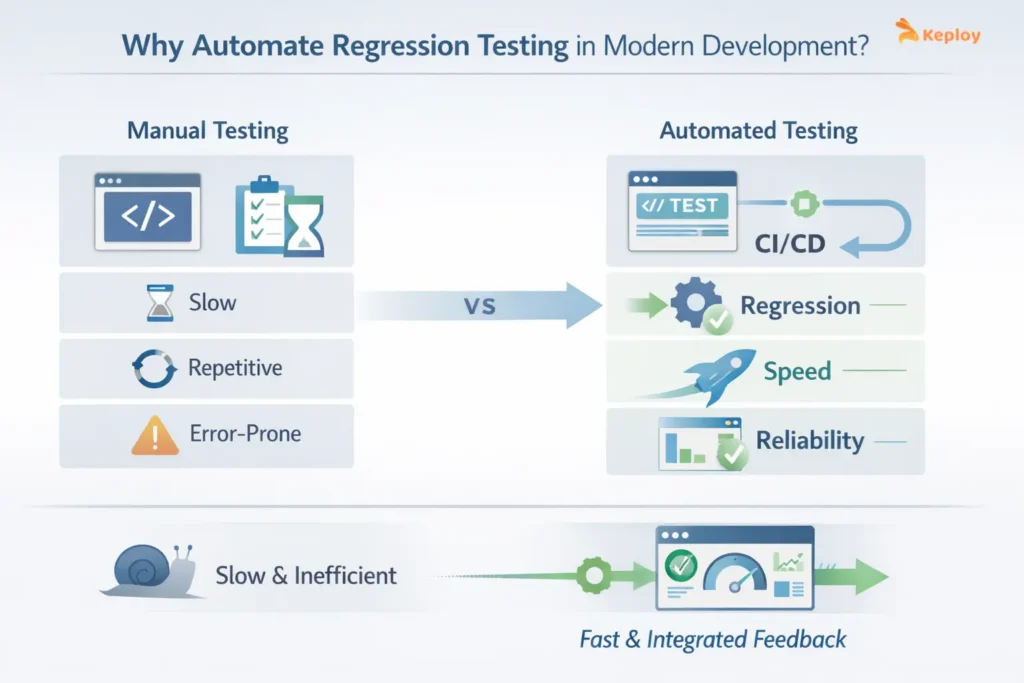

Why Automate Regression Testing in Modern Development?

Speed is the biggest driver. With CI/CD pipelines and frequent deployments, manual regression testing simply cannot keep up.

Automation helps teams:

- Ship faster without compromising stability
- Reduce repetitive testing effort
- Catch regressions early in the pipeline
- Maintain confidence during frequent releases

Example:

Teams at companies like Uber deal with constant state changes such as driver availability, trip status, and pricing updates, where even small regressions can disrupt core workflows.

Similarly, Stripe operates in a system where API-level changes can impact payment flows, retries, and transaction states, making automated regression testing critical to avoid silent failures.

These aren’t edge cases; regressions are a natural byproduct of continuous change.

Manual vs Automated Regression Testing

Manual regression testing involves testers re-running test cases by hand after every change. For small projects with infrequent releases, it is manageable. For anything running on a CI/CD pipeline, it becomes a bottleneck within weeks.

| Aspect | Manual Regression Testing | Automated Regression Testing |
|---|---|---|
| Speed | Slow, hours to days | Fast, minutes per run |
| Consistency | Prone to human error | Same result every time |
| CI/CD suitability | Not practical | Built for it |
| Maintenance effort | Low setup, high repeat effort | Higher setup, near-zero repeat effort |
| Best suited for | Exploratory, one-off checks | Repetitive, high-frequency validation |

When Should You Automate Regression Testing?

Not everything should be automated. This is where many teams go wrong.

Automation makes sense when:

- Test cases run frequently
- Features are stable
- Scenarios are critical to business workflows
- Tests need to run across multiple environments

Skip automating:

- One-time scenarios
- Rapidly changing features
- Tests where the expected outcome isn’t clear

Full automation isn’t the goal – the right coverage is.

Types of Regression Testing and When Each Applies

Not all regression testing looks the same. Corrective, selective, retest-all, and progressive regression testing each serve different scenarios, from minor bug fixes to major product releases. Choosing the right type is as important as deciding what to automate.

See the full breakdown: Types of Regression Testing in Software Testing

How to Automate Regression Testing Effectively

Automation is not just about writing scripts. It’s about building a sustainable process.

Defining a Workflow for Regression Testing in DevOps

A typical workflow for regression testing in DevOps looks like:

- Code commit triggers CI pipeline
- Automated test suite runs in parallel
- Failures are reported instantly
- Fixes are validated quickly

The goal is to keep feedback loops short.

When feedback is slow, developers have already moved on to the next change by the time a broken build surfaces. That’s the real cost – not the delay itself, but the context-switching and re-investigation that follows.

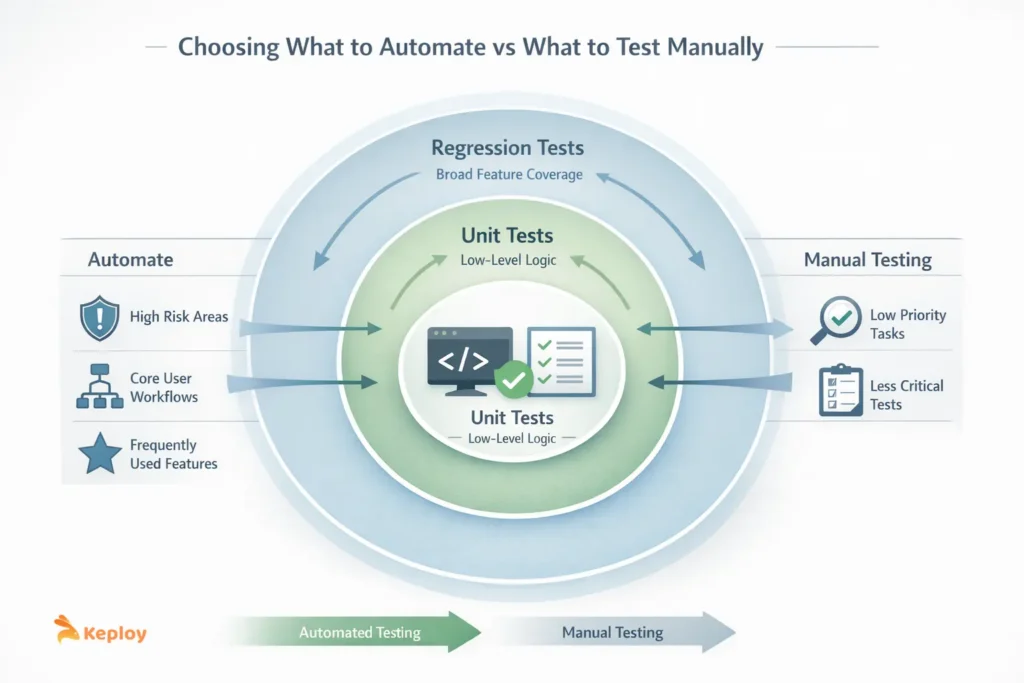

Choosing What to Automate vs What to Test Manually

A common mistake is trying to automate everything.

Instead, teams prioritize:

- High-risk areas
- Frequently used features
- Core user workflows

It also helps to be clear about:

- What belongs at the unit level
- What actually needs regression coverage

Overlapping those two layers creates redundant work and bloats pipelines. Our comprehensive guide on Unit testing vs functional testing covers this if you want to dig in.

Steps to Implement Automated Regression Testing

1. Audit existing manual test cases: Go through your current manual tests and identify which ones are stable, repeat frequently, and cover critical workflows. These are your automation candidates.

2. Select a tool that fits your stack: Match the tool to what you are testing. UI-heavy flows need something like Selenium or Playwright. API-heavy systems benefit from tools like Keploy or REST Assured. The tool must integrate cleanly with your CI/CD pipeline.

3. Write scripts for high-priority flows first: Start with the top 20% of test cases that cover 80% of business risk. Keep scripts modular so that individual pieces can be updated without rewriting everything.

4. Plug into your CI/CD pipeline: Configure tests to trigger automatically on every commit or pull request. The goal is zero manual intervention between a code push and a test result.

5. Set measurable thresholds: Define what a healthy suite looks like before problems surface. Track execution time, failure rate, and flaky test percentage from the start. The metrics section below has the recommended thresholds.

6. Review and trim the suite regularly: A test suite that is never pruned becomes a liability. Remove tests tied to deprecated features, update scripts when behavior changes, and keep the suite focused on what still matters.
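Step 3 can be approximated with a simple ranking. The sketch below assumes each test case has already been given a numeric risk score (how those scores are assigned is up to the team, and the names here are hypothetical), then picks the highest-risk tests until a target share of total risk is covered.

```python
def pick_high_priority(risk_by_test, target_share=0.8):
    """Return the highest-risk tests whose cumulative risk reaches target_share of the total."""
    total = sum(risk_by_test.values())
    if total <= 0:
        return []
    selected, covered = [], 0.0
    ranked = sorted(risk_by_test.items(), key=lambda kv: kv[1], reverse=True)
    for name, risk in ranked:
        if covered >= target_share * total:
            break  # enough risk is already covered
        selected.append(name)
        covered += risk
    return selected
```

With scores such as `{"checkout": 50, "login": 35, "search": 10, "footer": 5}`, only `checkout` and `login` are selected, which is the kind of short list worth scripting first.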

Automated Regression Testing Tools and Software

Choosing the right tools for automated regression testing is not just a technical decision; it directly affects how fast, reliable, and maintainable your testing process will be over time. In growing systems, the wrong tool can slow down pipelines, increase maintenance effort, and reduce confidence in test results.

Different types of tools solve different problems, and no single approach works for every system.

Types of Automated Regression Testing Tools

Common categories include:

UI testing tools for automated regression testing

These tools simulate real user interactions with the interface and are useful for validating front-end workflows. They help catch visual and interaction issues but are often slower and more prone to flakiness.

Examples: Selenium, Cypress, Playwright

API automated regression testing tools

These tools validate backend logic and service-level interactions, making them faster and more stable than UI tests.

Examples: Keploy, Postman, REST Assured

End-to-end automated regression testing tools

These validate complete workflows across multiple components and services.

Examples: Playwright, TestCafe, Cypress, Keploy

AI-driven automated regression testing tools

These tools use machine learning to generate, prioritize, or maintain test cases automatically.

Examples: Mabl, Functionize, Testim

Each category comes with trade-offs in terms of speed, reliability, and maintenance effort.

How to Choose the Right Automated Regression Testing Software?

Instead of chasing trends, evaluate tools based on:

- Execution speed – Faster tests improve feedback cycles.
- Ease of maintenance – A suite that’s difficult to update becomes a long-term liability.
- CI/CD integration – It needs to run automatically on every change, not just on demand.
- Real-world validation – Tools that validate actual system behavior tend to catch more than those relying purely on predefined scripts.

The goal is alignment with your system and workflow, not popularity.

Where Regression Testing Fits

Once you’re setting up automation, you’ll run into overlap. Different testing types start bleeding into each other, and without clear boundaries, you either:

- Duplicate work
- Miss gaps
- Load pipelines with unnecessary tests

Regression Testing vs Integration Testing

Integration testing checks how different components communicate. Regression testing checks whether existing functionality still works after a change.

| Aspect | Regression Testing | Integration Testing |
|---|---|---|
| Purpose | Ensure existing features still work after changes | Validate interaction between components |
| Focus | Stability over time | Communication between modules |
| When Used | After code changes | During system integration |
| Scope | Broad, repeated checks | Specific interaction points |

Both are necessary, but they solve different problems.

End-to-End Testing vs Regression Testing

End-to-end testing validates complete user workflows. Regression tests check that those workflows keep working after every update.

End-to-end tests are:

- Fewer
- Broader
- Slower

Regression tests are:

- More frequent
- More targeted
- Continuous

| Aspect | Regression Testing | End-to-End Testing |
|---|---|---|
| Goal | Ensure nothing breaks after updates | Validate complete workflows |
| Scope | Targeted and frequent | Broad and comprehensive |
| Speed | Faster | Slower |
| Frequency | High | Lower |

Functional vs Regression Testing

Functional testing verifies that a feature works. Regression testing verifies that it still works after a change.

- Functional: “Does it work?”
- Regression: “Does it still work?”

Key takeaway: Understanding this distinction between functional and regression testing avoids redundant testing.

| Aspect | Functional Testing | Regression Testing |
|---|---|---|
| Purpose | Validate feature behavior | Ensure existing features still work |
| Question | Does it work? | Does it still work? |
| Timing | During development | After changes |
| Role | Initial validation | Continuous validation |

Metrics and KPIs for Automated Regression Tests

Having automation in place is one thing. Knowing whether it’s trustworthy is another. Teams often track test count – which tells you almost nothing useful. Instead, track the following metrics:

- Test execution time – Ideally under 15 minutes
- Test coverage – Focus on 80–90% of critical workflows
- Failure rate – Keep false positives under 2%
- Flaky tests – Maintain below 5%

Tracking these metrics with specific thresholds helps teams prioritize effort, identify real issues faster, and maintain confidence in automated regression tests.
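One way to keep these thresholds honest is to check them in code after each run. The sketch below is illustrative only: the `SuiteStats` fields are hypothetical, and it assumes "false positives" means test runs that failed without a real defect and "flaky tests" means the share of tests in the suite flagged as flaky.

```python
from dataclasses import dataclass


@dataclass
class SuiteStats:
    duration_minutes: float
    critical_workflows: int          # assumed to be greater than zero
    critical_workflows_covered: int
    test_runs: int                   # assumed to be greater than zero
    false_positive_failures: int
    tests: int                       # assumed to be greater than zero
    flaky_tests: int


def health_report(stats):
    """Return {metric: True if within the threshold listed above}."""
    coverage = stats.critical_workflows_covered / stats.critical_workflows
    false_positive_rate = stats.false_positive_failures / stats.test_runs
    flaky_rate = stats.flaky_tests / stats.tests
    return {
        "execution_time": stats.duration_minutes < 15,
        "critical_coverage": coverage >= 0.80,
        "false_positives": false_positive_rate < 0.02,
        "flaky_tests": flaky_rate < 0.05,
    }
```

Any metric that comes back `False` is a candidate for the next cleanup pass, which is more actionable than a raw test count.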

At the delivery level, the real proof shows up in DORA’s Change Failure Rate – a sustained drop in this metric is the clearest signal that your regression suite is preventing production failures, not just generating green builds.
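Change Failure Rate is the share of deployments that lead to a failure in production. A minimal sketch of the arithmetic, assuming each deployment has been labeled with whether it caused a production failure (how that label is assigned is outside this example):

```python
def change_failure_rate(deployments):
    """deployments: list of booleans, True if that deployment caused a production failure."""
    if not deployments:
        return 0.0
    return sum(deployments) / len(deployments)
```

Comparing this value across comparable periods, for example before and after the regression suite was introduced, shows whether the trend is actually moving.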

Best Practices to Automate Regression Testing Successfully

Teams succeed when they:

- Keep test suites lean and relevant
- Prioritize high-risk features
- Run tests frequently and efficiently
- Regularly clean up outdated tests

Teams at Stripe and Netflix keep suites lean and focus on high-risk features. Not because they lack resources, but because a bloated test suite is its own kind of problem.

For larger organizations, some teams split the work: regression testing services handle scale while internal teams focus on new features.

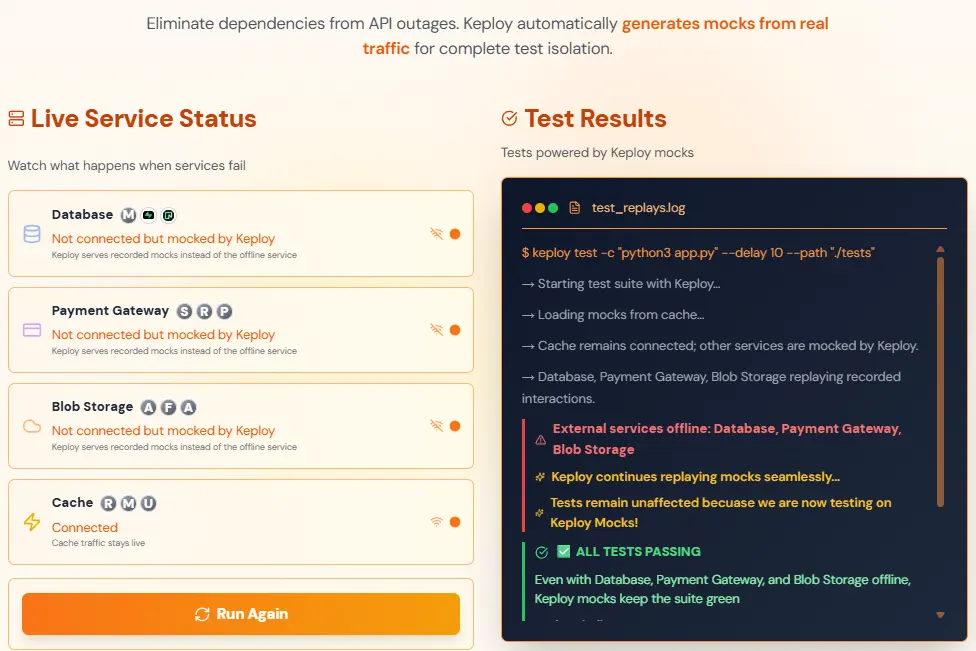

Challenges of Automated Regression Tests in Modern Systems

Common challenges include:

- Flaky tests → Flaky tests erode trust faster than almost anything else. Fix them through test isolation and stable environments.
- High maintenance → Maintenance overhead compounds as the application changes. Regular reviews and a focus on stable, high-value scenarios keep it manageable.
- Slow execution → Slow execution gets worse as suites grow. Running in parallel and prioritizing critical tests keep pipelines from becoming bottlenecks.
- Unrealistic environments → Simulating real behavior is harder than it looks. Most test environments don’t reflect production accurately. Testing with real traffic or replayed interactions addresses this more directly than synthetic environments.

None of these challenges are reasons to avoid automation. In fact, these are reasons to be thoughtful about how you build it.
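Flaky tests in particular are easier to fix once they are identified systematically. The sketch below uses one common working definition, stated here as an assumption: a test is flaky if it both passed and failed against the same commit. The record format `(test_name, commit_sha, passed)` is hypothetical.

```python
from collections import defaultdict


def find_flaky(runs):
    """Return tests that produced both a pass and a fail on the same commit."""
    outcomes = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)
    # Two distinct outcomes for one (test, commit) pair means the result is not deterministic.
    return sorted({test for (test, _), seen in outcomes.items() if len(seen) == 2})
```

A test that fails on one commit and passes on a later one is not flagged, since that pattern usually reflects a genuine fix rather than nondeterminism.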

The Future of Automated Regression Testing

The focus of regression testing is shifting from volume to meaningful validation. Instead of running thousands of tests blindly, teams are moving toward:

- Smart test selection – Prioritizing tests based on risk and recent changes.
- AI-driven prioritization – Leveraging machine learning to optimize coverage without slowing pipelines.
- Production-aware testing – Validating real system behavior rather than synthetic test cases.
- API-level validation – Ensuring backend logic and workflows remain stable across releases.
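In its simplest form, smart test selection is a mapping from changed files to the tests that exercise them. The sketch below assumes such a mapping is maintained by path prefix; the function name, the mapping, and the paths in the usage note are all hypothetical, and production tools typically derive this from coverage data or dependency graphs instead.

```python
def select_tests(changed_files, tests_by_path_prefix, always_run=()):
    """Pick tests whose mapped path prefix matches at least one changed file."""
    selected = set(always_run)  # smoke tests that run regardless of the change
    for path in changed_files:
        for prefix, tests in tests_by_path_prefix.items():
            if path.startswith(prefix):
                selected.update(tests)
    return sorted(selected)
```

For a change touching only `payments/refund.py`, a mapping like `{"payments/": ["test_refund", "test_charge"], "search/": ["test_search"]}` would skip the search tests entirely while still running anything listed in `always_run`.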

Keploy’s approach, replaying real API interactions, exemplifies this future. It allows teams to validate workflows continuously while reducing flaky regression cycles and dependency on staging.
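The general record-and-replay idea can be sketched in a few lines. This is not Keploy's API; it is a generic illustration with hypothetical names, assuming requests and responses are plain dictionaries and that volatile fields such as timestamps should be ignored when comparing.

```python
def replay(recorded, handler, ignore_fields=("timestamp",)):
    """Replay recorded requests against handler; return requests whose responses changed."""

    def strip(response):
        # Drop fields that legitimately differ between runs.
        return {k: v for k, v in response.items() if k not in ignore_fields}

    mismatches = []
    for request, expected in recorded:
        actual = handler(request)
        if strip(actual) != strip(expected):
            mismatches.append(request)
    return mismatches
```

An empty result means the current build answers every recorded request the way the earlier build did, apart from the ignored fields; any returned request is a candidate regression to investigate.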

Conclusion

Automated regression testing is about building trust in every release. Speed only matters if you’re confident the changes are validated.

Effective regression testing isn’t about:

- Coverage percentages
- Test counts

It’s about:

- Reliable feedback
- Fast enough to act on
- Covering the things that actually matter

The teams doing this well are:

- Testing real behavior
- At the API layer
- With short feedback loops
- Using small, focused suites they actually trust

By focusing on relevance, real-world validation, and efficient workflows, teams can scale reliably and sustainably.

FAQs

1. Can automated regression testing replace manual testing?

No. Automation handles repetitive validation well. It doesn’t replace exploratory testing or edge-case investigation.

2. How often should automated regression tests run?

On every code commit, or at minimum once per CI/CD cycle.

3. What is the difference between regression testing and retesting?

Retesting verifies that a specific defect has been fixed. Regression testing checks that the fix has not broken anything else in the system. Both are often run after a bug fix, but they serve different purposes. Retesting is targeted; regression testing is broad.

4. How do you reduce flaky tests in regression suites?

- Use mocks built from real data
- Test in isolated containers
- Track flake rates weekly

Add retries only as a last resort – they hide the problem rather than fixing it.

5. Does automated regression testing scale for serverless architectures?

Yes. Focus on API endpoints. Tools that replay real traffic work well here because you’re not depending on infrastructure state.
